To begin with, what is good science? Good science is based on examination of empirical or measurable evidence, with the findings subject to specific principles of reasoning (a.k.a. the ‘scientific method’). This is where disciplines masquerading as science fall foul. Belief systems such as homeopathy and chiropractic cannot be called "sciences"; they are pseudoscientific because they are presented as scientific but are not supported by evidence-based studies. These should be given a wide berth.

Inside a Chinese homeopathy shop (image: dorklepork)

Good science draws a distinction between facts, theories, truths and opinions. A fact is something generally accepted as "reality" (although still open to investigation); it contrasts with "the absolute truth," which is not science because it cannot be challenged. A theory is based on an objective consideration of evidence, which distinguishes it from an opinion; an opinion is subjective. For example (using a fictitious person called Fred):

• To say Fred is an affable person is an opinion;

• If Fred says that his glass will break if he drops it on the floor, that is a theory, although one that can be tested by dropping the glass multiple times onto different surfaces to see how often it breaks, and whether breakage is more likely on concrete or on carpet;

• If Fred jumps from a diving board, he predicts he will fall downwards into the water due to gravity. This statement is both a fact and a theory: it has a high probability of being correct, and it is based on a large body of research dating back to Isaac Newton.
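The glass-dropping test in the second example shows how a claim becomes testable once it is repeated and counted. A minimal Python sketch of the tally might look like this; the function name `break_rates` and the trial outcomes are hypothetical, invented purely to illustrate the idea rather than taken from any real experiment.

```python
from collections import defaultdict


def break_rates(trials):
    """Return the fraction of drops that broke the glass, per surface.

    `trials` is a list of (surface, broke) pairs, one per drop.
    """
    drops = defaultdict(int)   # number of drops onto each surface
    breaks = defaultdict(int)  # number of those drops that broke the glass
    for surface, broke in trials:
        drops[surface] += 1
        breaks[surface] += broke  # True counts as 1, False as 0
    return {surface: breaks[surface] / drops[surface] for surface in drops}


# Hypothetical outcomes, invented purely for illustration
trials = (
    [("concrete", True)] * 9 + [("concrete", False)]
    + [("carpet", True)] * 2 + [("carpet", False)] * 8
)

print(break_rates(trials))  # {'concrete': 0.9, 'carpet': 0.2}
```

With only ten drops per surface the rates are rough estimates; more drops would make the comparison between concrete and carpet more reliable.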

To produce good science a "good scientist" is required. A good scientist is not simply someone who has studied a scientific subject and who is keen to learn. A good scientist will always retain a degree of uncertainty, will be prepared to question, and will acknowledge that there is always something more to learn. A good scientist will never claim to know all there is to know about his or her subject.

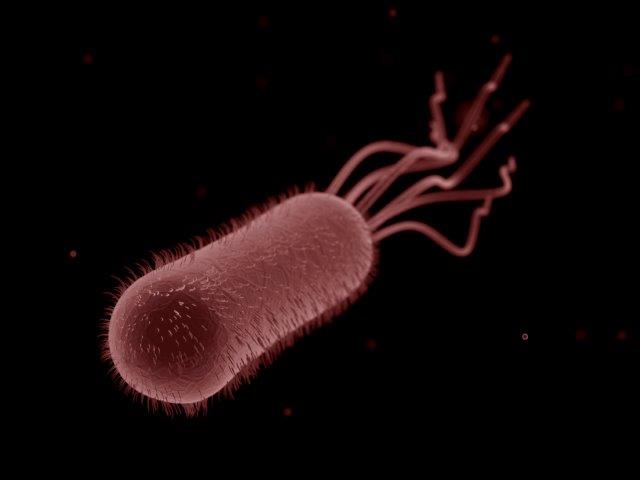

A microbiologist undertakes molecular testing into an unknown bacterium. Photograph taken in Tim Sandle's laboratory.

The foundation of good science is independent research, and there are signs that this is becoming more commonplace. As to why this is necessary, the British Medical Journal found that 97 percent of studies sponsored by a company delivered results in favor of the sponsor. When researchers declare their interests up front, readers have a degree of assurance before they even begin reading the study.

Where the results of a scientific study can be closely scrutinized, researchers are more likely to make sure of their findings and less willing to present weak research. A recent trend in the scientific world has been towards open access. This is where science papers are made available for anyone to read for free rather than hidden behind a paywall. Access to a journal that requires an upfront payment isn’t cheap, and if you want to look at several papers the cost soon goes beyond the reach of most people. Not all journals have adopted the open access model, although more are doing so. This trend should be encouraged.

Linked to open access, though still not as common as it should be, is open access to the experimental data. Many medical doctors, for example, practise evidence-based medicine. Being unable to see the full set of results from a clinical trial hampers their assessment of a drug’s efficacy. The biggest culprits remain pharmaceutical companies, although government-run health and science agencies have become much better in recent years. To help push through access to clinical trial data, a campaign website has been set up. Called “All Trials”, the site has succeeded in convincing some journals to accept only papers where there is full disclosure of data. To encourage more openness, the site also carries a petition.

Another way to improve findings is to ensure that research papers which undertake "systematic reviews" are sufficiently wide-ranging in scope. Some literature reviews cherry-pick certain facts and figures to suit an argument. The better review articles draw on a range of databases and assess all of the literature on a given subject. For example, a review of Czech studies on mouse models of cancer would not be as comprehensive as a review of cancer cases in animals and humans across all of Europe.

Science journalists can also do better. The perennial problem for the science journalist is "how can I sum up this topic in just one or two sentences that will make audiences want to read more?" Sometimes the scientist behind the research will like this and enjoy seeing their ideas reach a large audience. At other times, the scientist might be thinking: "how dare you try and reduce a body of research to a shallow soundbite?" Science journalists must try to present the facts and avoid exaggeration while still engaging their audience.

To close out, just as the previous article concluded with a checklist for spotting bad science, this article ends with the foundations of ‘good science’:

1. Good science starts with a question worthy of answering.

2. This question should lead into background research, looking at what has been studied before. Research should always build on what has gone before, and scientifically recognized mechanisms should be employed.

3. Research should use natural mechanisms. There is no recorded case of a non-natural explanation proving scientifically useful. Research should also reflect realistic conditions: for example, a study which concluded that drinking 30 cans of Coke in 10 minutes made people feel ill would have strayed well away from what might really happen in society.

4. From the question, a hypothesis should be generated upfront. For example, “smoking tobacco does not cause cancer”. Having set this, scientists would then test whether the hypothesis is true or false.

The best hypotheses lead to predictions that can be tested in various ways. Following the philosopher Karl Popper and his principle of "falsifiability", these predictions should be tested experimentally, and the experiment should attempt to disprove the hypothesis rather than prove it. So, with the cancer example, scientists would investigate the hypothesis “smoking tobacco does not cause cancer” by trying to show that it does (and had the hypothesis been the other way around, they would attempt to show that smoking does not cause cancer).

5. Similarly, the research should be free of dubious assumptions.

6. Having gone through these thought processes, the experiment should then be designed, asking questions such as: how much data will be needed? How long will the experiment run?

7. Where test subjects are used, the subjects — especially people — should be representative of the larger population that the results are intended to apply to. Note should be made of gender and demographic differences.

8. When an experiment is run, there should be controls. In a chemical study this might be something known not to react; in clinical trials, this will be the inclusion of a placebo. For human research studies, the gold standard is a randomized controlled trial (a type of study where the scientist randomly assigns participants either to receive the treatment/exposure or not, and is ideally unaware which participants are receiving the treatment).

9. Good experiments are repeated many times, and the results are subjected to robust statistical analysis. If an experiment cannot be repeated to produce the same results, this implies that the original results might have been in error.

10. The collected evidence should be peer reviewed by the scientific community. These external experts should give their views anonymously.

11. Findings should be stated cautiously and should include the study's limitations. A theory may or may not be formed.

12. One study inevitably leads on to another. The evidence will rarely be the end of the inquiry; instead it should serve as the basis for further studies.
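Points 4, 8 and 9 can be illustrated together with a short Python sketch. It randomly assigns participants to a treatment or a control (placebo) group, then analyses the outcome with a permutation test, which is one common statistical approach among several. In keeping with Popper's idea of trying to disprove rather than prove, the test starts from the hypothesis that the treatment has no effect and asks how often chance alone would produce a difference as large as the one observed. The function names `randomize` and `permutation_test`, and the scores in the usage section, are hypothetical and chosen only for illustration; blinding the scientist to group membership is not modelled here.

```python
import random
import statistics


def randomize(participants, seed=None):
    """Randomly split participants into a treatment group and a control group."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def permutation_test(treatment, control, n_resamples=10_000, seed=None):
    """Two-sided permutation test on the difference in group means.

    Null hypothesis: the treatment has no effect, so the group labels are
    interchangeable. Returns the observed difference in means and an
    estimated p-value: the share of random relabellings that give a
    difference at least as large as the one observed.
    """
    rng = random.Random(seed)
    observed = statistics.mean(treatment) - statistics.mean(control)
    pooled = list(treatment) + list(control)
    n_treated = len(treatment)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # reassign labels at random
        diff = statistics.mean(pooled[:n_treated]) - statistics.mean(pooled[n_treated:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # The +1 terms count the observed labelling itself, a standard correction
    return observed, (extreme + 1) / (n_resamples + 1)


if __name__ == "__main__":
    treatment_group, control_group = randomize([f"P{i}" for i in range(1, 11)], seed=42)
    print("Treatment:", treatment_group)
    print("Control:  ", control_group)

    # Hypothetical outcome scores, invented purely for illustration
    treated_scores = [7.1, 6.8, 7.4, 6.9, 7.2]
    placebo_scores = [6.2, 6.5, 6.0, 6.4, 6.3]
    diff, p = permutation_test(treated_scores, placebo_scores, seed=1)
    print(f"difference in means = {diff:.2f}, estimated p = {p:.4f}")
```

A small p-value suggests that the "no effect" hypothesis is hard to sustain; a large one means the data do not refute it. Either way, as point 9 says, the experiment should be repeated before much weight is placed on a single result.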

Keeping these criteria in mind, scientists can produce better research, and the general community will have greater confidence in the information presented. Readers wanting to judge whether the findings of a science paper are worthwhile can use the key points above to make an assessment.